Definition: A schedule of reinforcement is a set of rules that determines when a behaviour is followed by a reinforcer (or a punishment) in operant conditioning. In a game, it determines how often a player is reinforced for a particular behaviour.

Reinforcer: an outcome or result, generally a reward such as experience points, completing a level, or a bigger gun.

Operant conditioning: a response that is followed by a reinforcing stimulus is more likely to occur again.

Particular behaviour pattern (or response): pushing a button 100 times, killing a monster, visiting an area of the game board.

In game terms, this is the familiar gameplay loop with two additions: the reinforcement itself, and the interval (of time or of responses) that separates one reinforcement from the next.

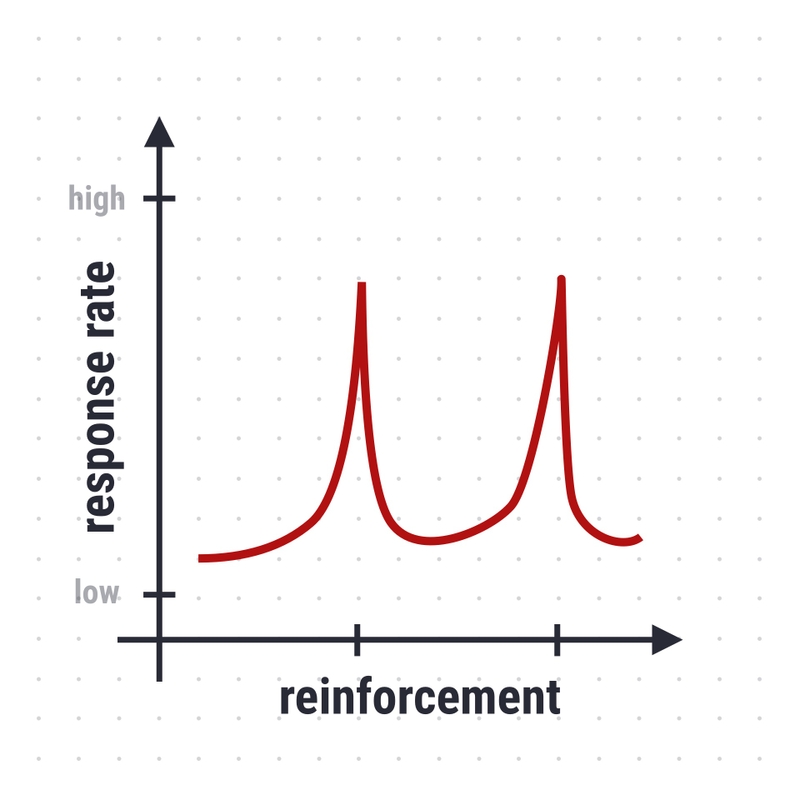

There are four basic patterns of partial (intermittent) reinforcement:

- fixed interval

- variable interval

- fixed ratio

- variable ratio.

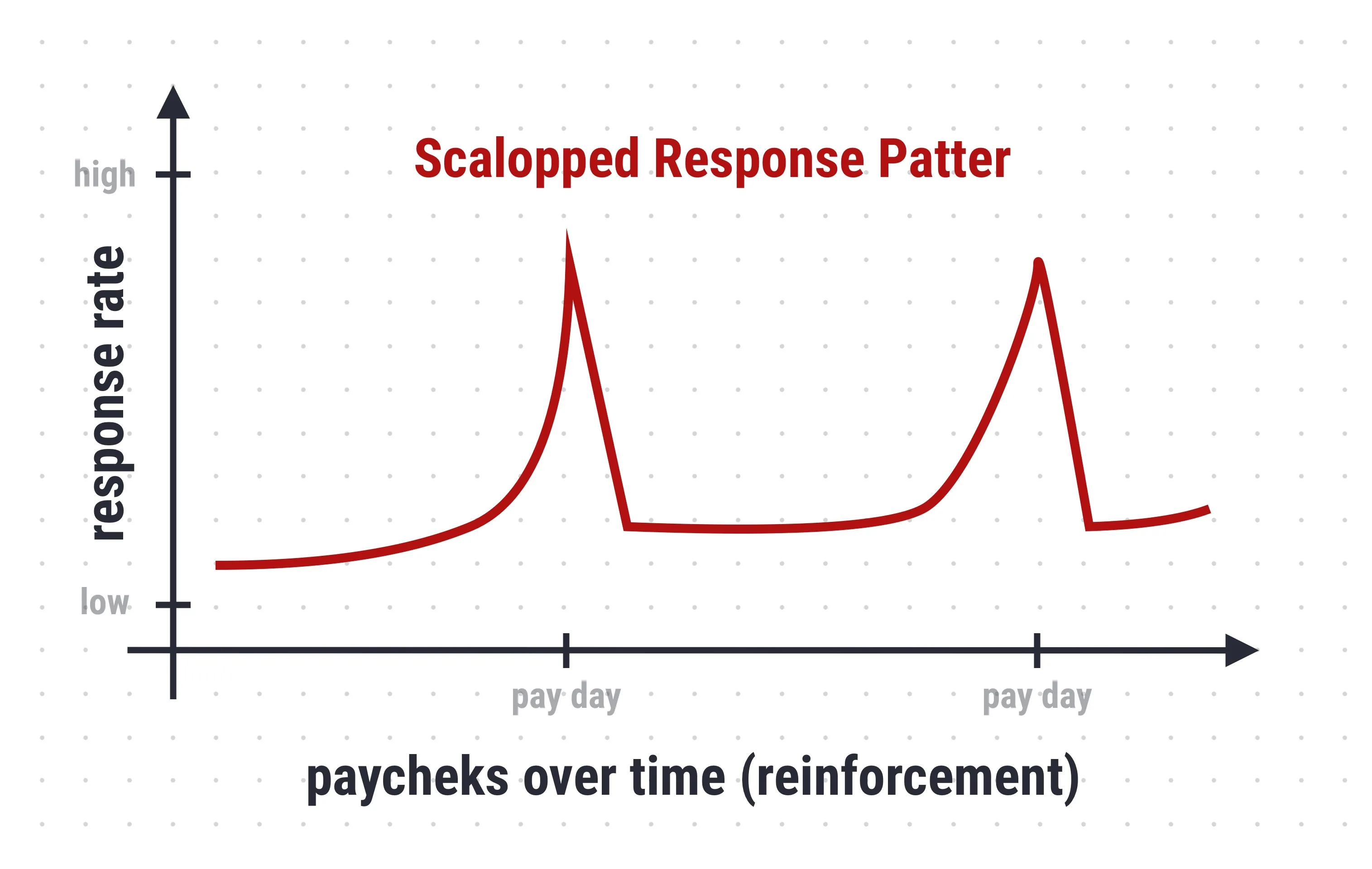

Fixed interval schedule

Interval schedules involve reinforcing a behaviour after some time has passed. In a fixed interval schedule, the interval of time is always the same.

Example in video games: Waiting for monsters to re-spawn in a game area where re-spawning occurs at fixed intervals.

Example in natural environments: A day job that pays a paycheck every two weeks, regardless of performance at the job.
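The fixed interval rule can be sketched in a few lines of Python. This is only an illustration: the class name `FixedIntervalSchedule`, the 30-second interval, and the respawn scenario are assumptions made for the example, not part of any particular game engine.

```python
class FixedIntervalSchedule:
    """Reinforce the first response made after a fixed amount of time has elapsed."""

    def __init__(self, interval_seconds: float, start_time: float = 0.0):
        self.interval_seconds = interval_seconds
        self.next_available_at = start_time + interval_seconds

    def respond(self, now: float) -> bool:
        """Return True if a response made at time `now` is reinforced."""
        if now >= self.next_available_at:
            # The next interval is counted from this reinforced response.
            self.next_available_at = now + self.interval_seconds
            return True
        return False


# Hypothetical respawn timer: the monster is back 30 seconds after the last kill.
respawn = FixedIntervalSchedule(interval_seconds=30.0)
fi_results = [respawn.respond(now=t) for t in (10.0, 29.0, 30.0, 45.0, 60.0)]
print(fi_results)  # [False, False, True, False, True]
```

Responses made before the interval has elapsed earn nothing, so on this schedule showing up early is never rewarded.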

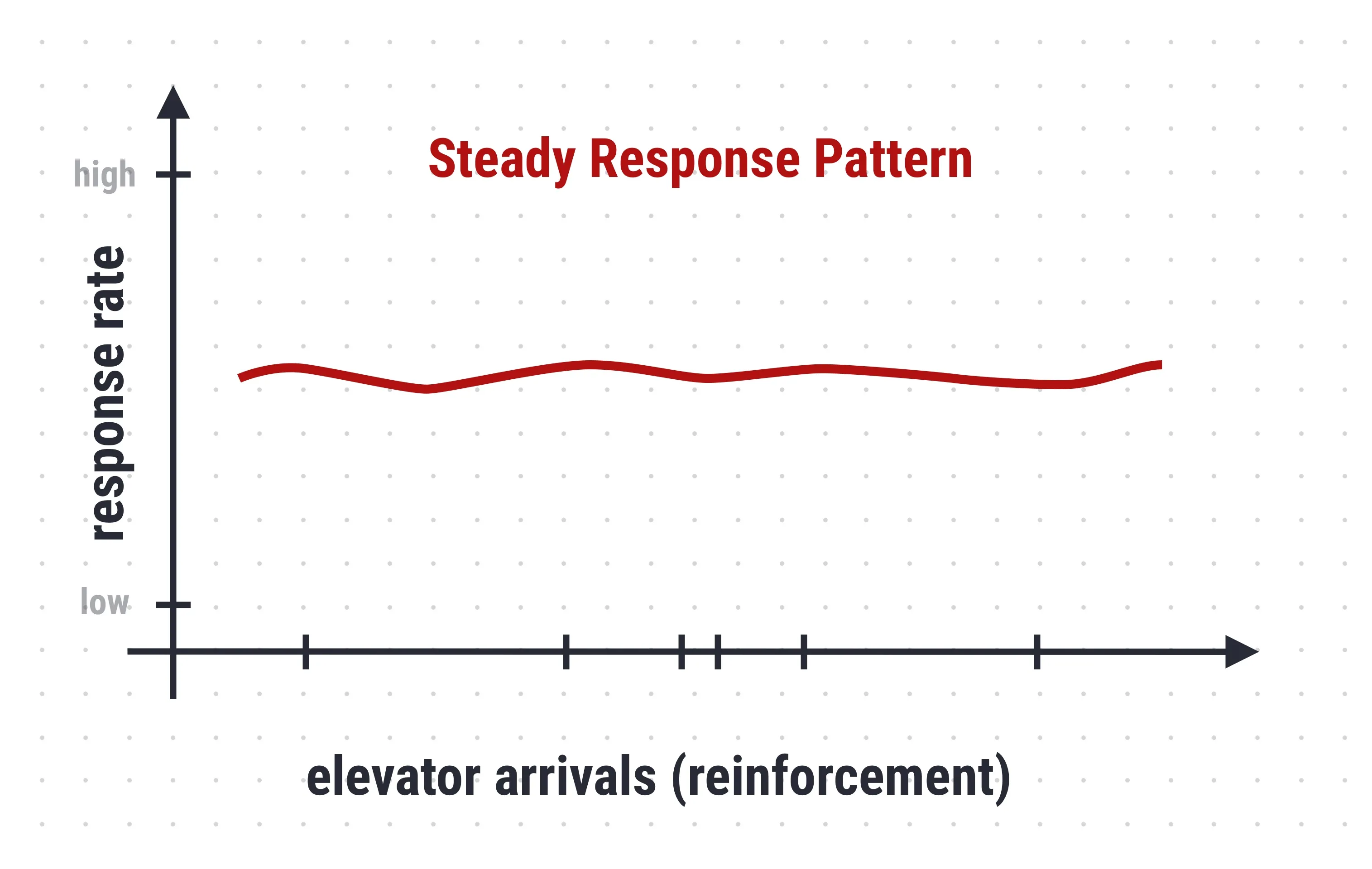

Variable interval schedule

A variable interval schedule also reinforces a behaviour after some time has passed, but the length of that time is unpredictable. The interval is not always the same; it centres around some average length of time.

Example in video games: A monster holding epic treasure may appear at random.

Example in natural environments: Checking email or calling an elevator. Fishing also fits, if you just cast your hook and wait.
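A variable interval schedule could be sketched as follows. The class name `VariableIntervalSchedule` and the choice of drawing each interval uniformly between 0.5 and 1.5 times the mean are assumptions made for this example; any distribution with the desired average would illustrate the same idea.

```python
import random


class VariableIntervalSchedule:
    """Reinforce the first response after a time interval that varies around a mean."""

    def __init__(self, mean_interval: float, rng: random.Random, start_time: float = 0.0):
        self.mean_interval = mean_interval
        self.rng = rng
        self.next_available_at = start_time + self._draw_interval()

    def _draw_interval(self) -> float:
        # Assumption: uniform on [0.5 * mean, 1.5 * mean], which averages to the mean.
        return self.rng.uniform(0.5 * self.mean_interval, 1.5 * self.mean_interval)

    def respond(self, now: float) -> bool:
        """Return True if a response made at time `now` is reinforced."""
        if now >= self.next_available_at:
            self.next_available_at = now + self._draw_interval()
            return True
        return False


# Hypothetical rare monster that appears roughly once a minute.
vi_rng = random.Random(7)
spawn = VariableIntervalSchedule(mean_interval=60.0, rng=vi_rng)
# The player checks the area once per second for an hour.
vi_reward_times = [t for t in range(3600) if spawn.respond(now=float(t))]
vi_gaps = [b - a for a, b in zip(vi_reward_times, vi_reward_times[1:])]
print(len(vi_reward_times), min(vi_gaps), max(vi_gaps))
```

Because the player cannot tell when the next reward becomes available, the only way to avoid missing it is to keep checking.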

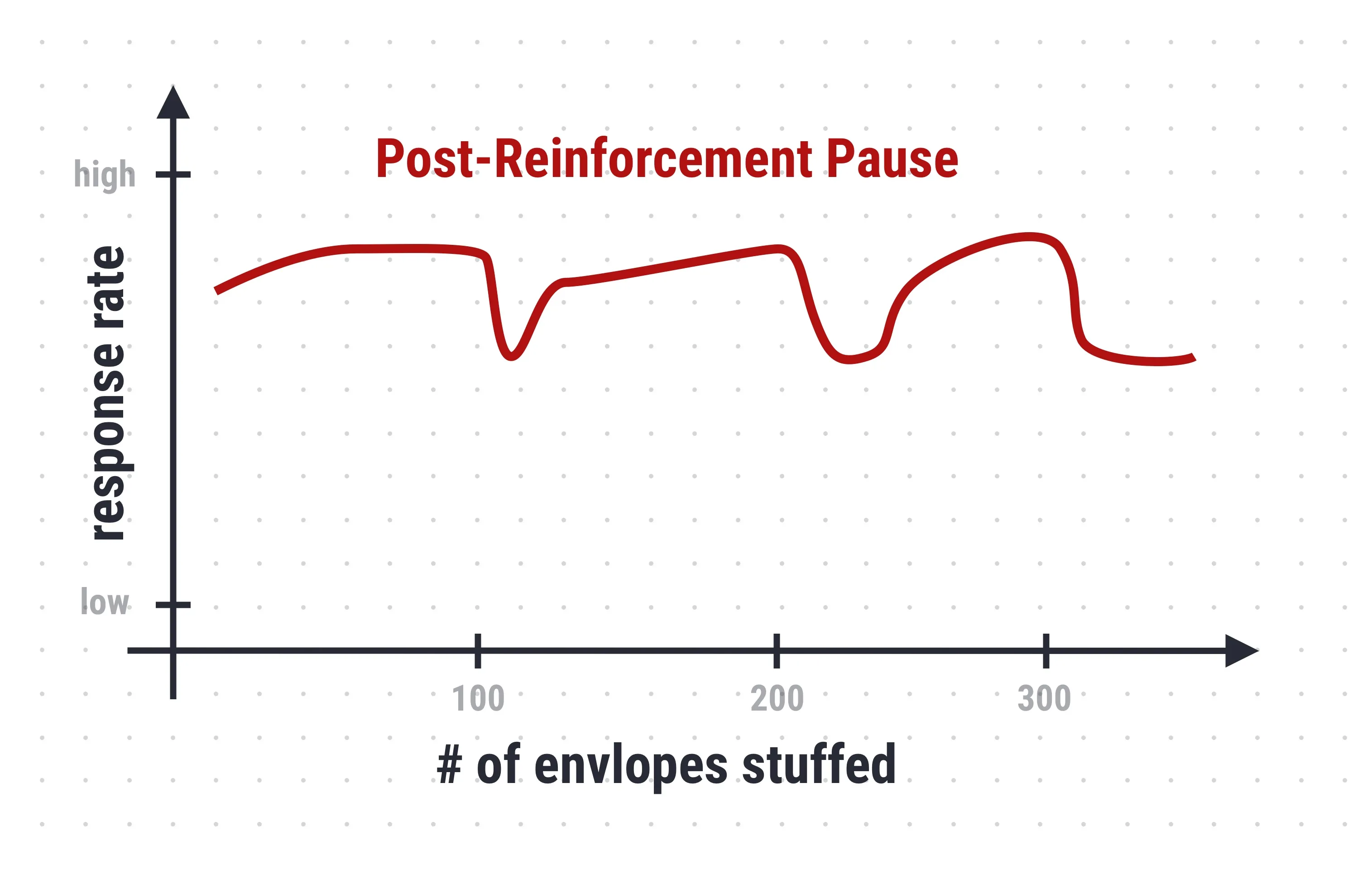

Fixed ratio schedule

Ratio schedules involve reinforcement after a certain number of responses have been emitted. The fixed-ratio schedule involves using a constant number of responses.

Example in video games: A character “levels up” after gaining a constant amount of experience points.

Example in natural environments: Jobs that pay per unit delivered.
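As a rough sketch, a fixed ratio schedule is just a counter that resets each time the reward is paid out. The class name `FixedRatioSchedule` and the "every fifth kill" rule are hypothetical values chosen for the example.

```python
class FixedRatioSchedule:
    """Reinforce every N-th response, where N is constant."""

    def __init__(self, responses_required: int):
        self.responses_required = responses_required
        self.count = 0

    def respond(self) -> bool:
        """Register one response and return True if it is reinforced."""
        self.count += 1
        if self.count >= self.responses_required:
            self.count = 0  # start counting towards the next reward
            return True
        return False


# Hypothetical rule: every 5th monster killed drops a chest.
chest = FixedRatioSchedule(responses_required=5)
fr_results = [chest.respond() for _ in range(12)]
fr_rewarded_kills = [i + 1 for i, hit in enumerate(fr_results) if hit]
print(fr_rewarded_kills)  # [5, 10]
```

Unlike the interval schedules, time plays no role here: the faster the player responds, the sooner the reward arrives.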

Variable ratio schedule

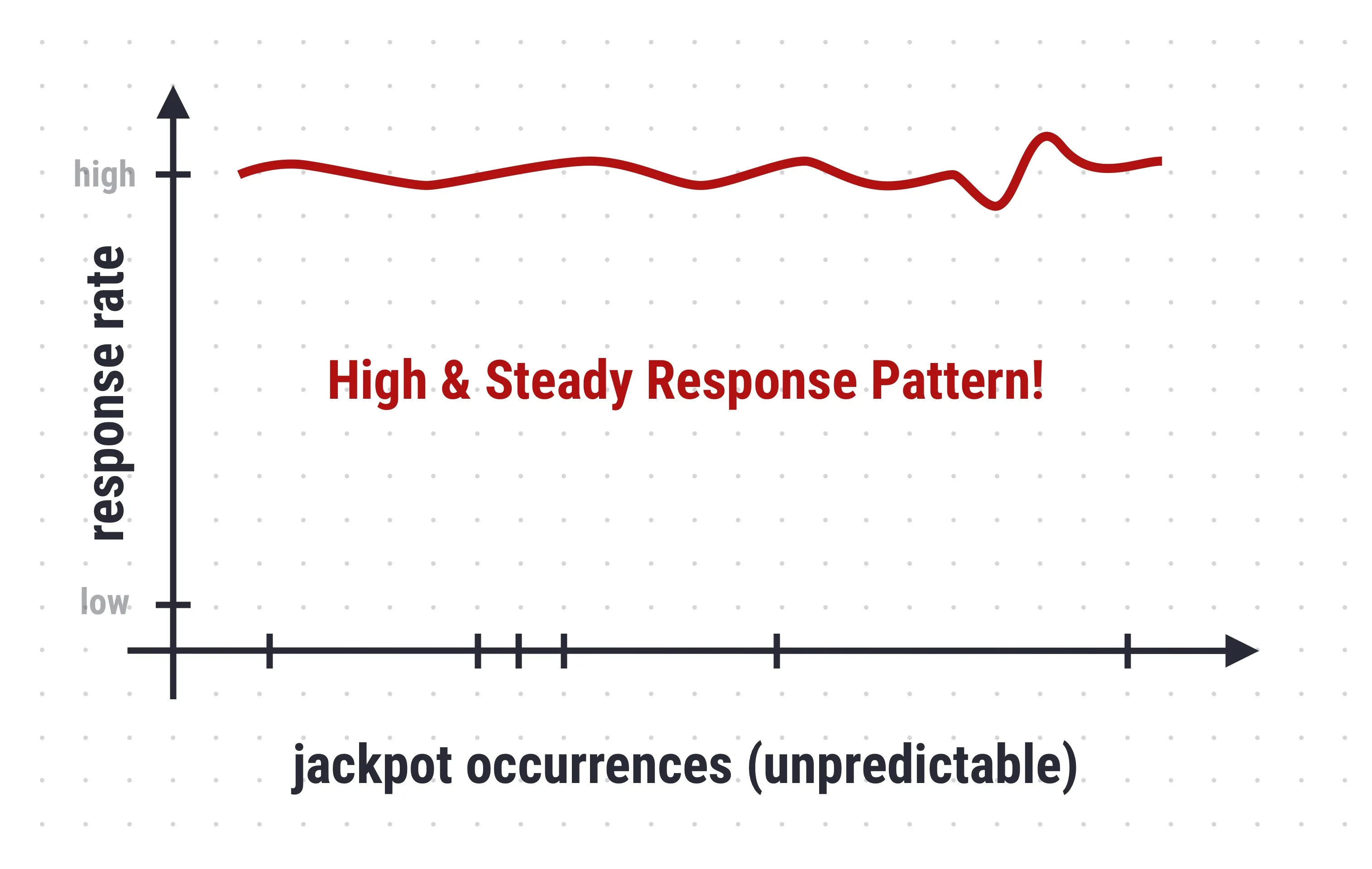

A reinforcer is given after an unpredictable number of responses, which varies around some average. This schedule produces a high, steady rate of responding and is generally considered the most effective of the four for maintaining behaviour.

Example in video games: Loot “grinding” in RPGs.

Example in natural environments: Slot machines. Players have no way of knowing how many times they have to play before they will win. All they know is that eventually, a play will win.
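A variable ratio schedule could be sketched like this. The class name `VariableRatioSchedule` and the uniform draw of the required number of responses are assumptions made for the example. Many games instead roll a fixed drop chance on every kill, which is a closely related but different implementation.

```python
import random


class VariableRatioSchedule:
    """Reinforce after a number of responses that varies around a mean."""

    def __init__(self, mean_responses: int, rng: random.Random):
        self.mean_responses = mean_responses
        self.rng = rng
        self.count = 0
        self.required = self._draw_requirement()

    def _draw_requirement(self) -> int:
        # Assumption: uniform integer on [1, 2 * mean - 1], which averages to the mean.
        return self.rng.randint(1, 2 * self.mean_responses - 1)

    def respond(self) -> bool:
        """Register one response and return True if it is reinforced."""
        self.count += 1
        if self.count >= self.required:
            self.count = 0
            self.required = self._draw_requirement()
            return True
        return False


# Hypothetical loot drop that takes 10 kills on average.
vr_rng = random.Random(42)
loot = VariableRatioSchedule(mean_responses=10, rng=vr_rng)
vr_results = [loot.respond() for _ in range(10_000)]
vr_drops = sum(vr_results)
print(vr_drops, 10_000 / vr_drops)  # number of drops, average kills per drop
```

From the player's side, any single kill might be the one that pays out, which is the property the slot machine example relies on.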

These observations were made in experiments using an operant conditioning chamber, the so-called “Skinner box”, which is a laboratory apparatus used to study animal behaviour. The chamber was created by B. F. Skinner.